The Return to Documentation Discipline

March 12, 2026 · 11 min read

For a while, I have been thinking about why working with AI agents feels strangely familiar.

Not familiar in the sense that the tools are mature. They are not. The ground keeps moving. Models improve, regress, hallucinate, surprise, and demand that you rethink your process every few days. But familiar in a deeper way: the kind of thinking that makes this work succeed feels very close to the kind of UX practice many of us learned years ago.

That has been one of the most interesting realizations for me.

A lot of the conversation around AI and design has focused on speed, automation, and role compression. Some of it has been energizing. Some of it has been alarmist. Much of it has been thin. What I have experienced instead is something more specific and, to me, more useful: a return to documentation discipline.

I do not mean a return to bloated deliverables or process for process’s sake. I mean the ability to think clearly, structure a problem, define intent precisely, communicate constraints, and evaluate output against a standard. In other words: the discipline many generalist UX practitioners had to build when the work demanded research, information architecture, prototyping, specification writing, and testing, all in relation to one another.

The audience for the specification has changed. The discipline has not.

Why this feels like a return, not a rupture

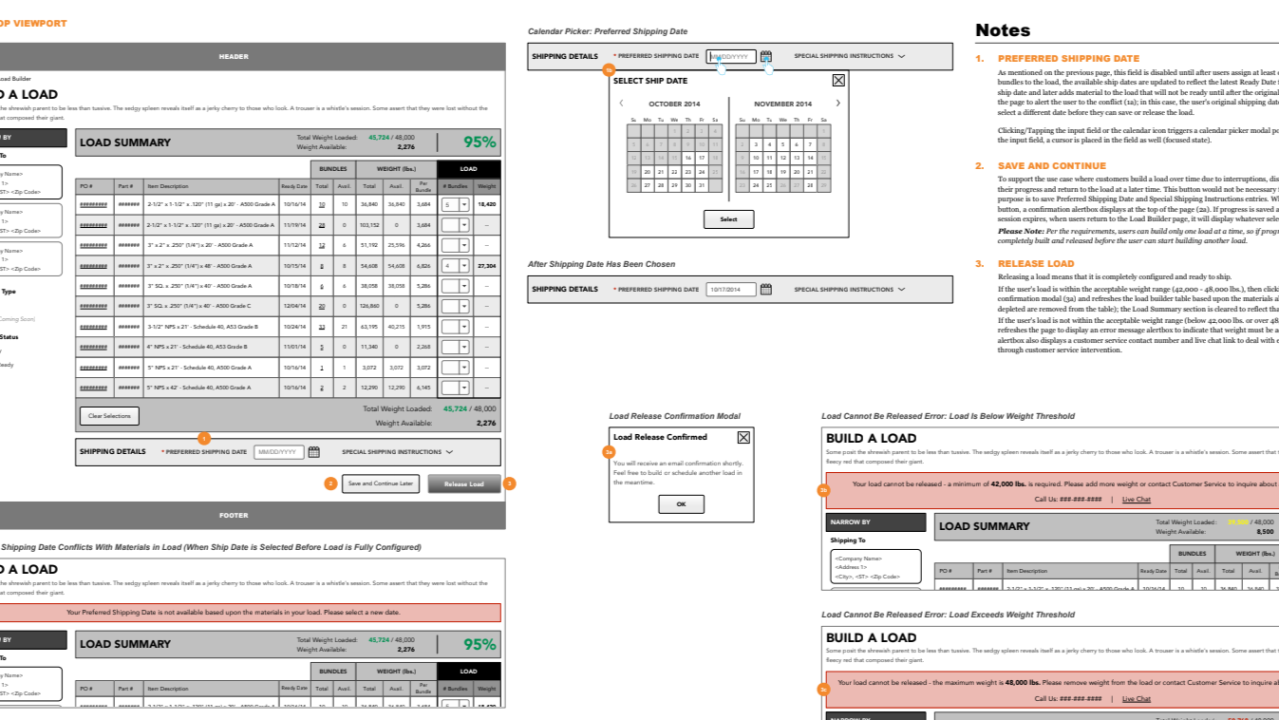

Earlier in my career, a meaningful part of design work involved creating annotated wireframes, functional requirements, interaction notes, content structures, and handoff documents that helped engineering teams understand not just what to build, but why it needed to work a certain way. The best versions of those artifacts were never decorative. They were thinking tools. They reduced ambiguity. They translated intention into action.

In many organizations, UX later fragmented into specialist roles. Research became one lane. Information architecture another. Visual design another. Then “product design” recompressed many of these responsibilities, often with a stronger emphasis on UI execution and a lighter emphasis on specification rigor, structured documentation, and explicit reasoning.

That shift made sense in context. Teams wanted speed. Design systems matured. Agile rituals tightened. Shipping became the dominant verb.

But AI has a funny way of resurfacing the skills we quietly devalued.

When you work with an AI agent to generate code, content, components, or workflows, you quickly learn that vague prompts produce vague outcomes. Loose thinking shows up in the output. Missing structure becomes visible. Contradictions multiply. And when the system fails, it often fails in ways that point right back to the quality of the brief.

That is why this moment feels less like the death of design to me and more like a re-centering of some of its most foundational disciplines.

The generalist advantage

I want to be careful here, because “UX” is a broad umbrella. Not every background translates in the same way.

The pattern I keep seeing is that generalist practitioners may be more prepared for this shift than they realize. By generalist, I mean the person who can move across the full arc of problem framing and solution shaping: understanding user needs, structuring information, modeling flows, defining requirements, prototyping interactions, and evaluating whether the output actually serves the intended job. That range matters.

It matters because working effectively with AI is not just about asking for something clever. It is about assembling usable context for the model to work from.

You need to know:

- what problem is being solved
- for whom
- under what constraints
- in what sequence
- with what dependencies
- against what standards of quality.

That is not just prompting. That is design thinking made concrete.
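One way to picture this is to treat the six questions as fields in a small structure that can report its own gaps. The sketch below is only an illustration of that idea, not a description of any particular tool: the `Brief` class, its field names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical container for the six questions listed above."""
    problem: str = ""
    audience: str = ""
    constraints: list[str] = field(default_factory=list)
    sequence: list[str] = field(default_factory=list)
    dependencies: list[str] = field(default_factory=list)
    quality_standards: list[str] = field(default_factory=list)

    def missing(self) -> list[str]:
        """Return the names of fields that are still empty."""
        return [name for name, value in vars(self).items() if not value]

    def render(self) -> str:
        """Lay the brief out as labeled sections, skipping empty ones."""
        sections = []
        for name, value in vars(self).items():
            if not value:
                continue
            label = name.replace("_", " ").capitalize()
            if isinstance(value, list):
                body = "\n".join(f"- {item}" for item in value)
            else:
                body = value
            sections.append(f"{label}:\n{body}")
        return "\n\n".join(sections)


# Illustrative values only; none of this comes from a real project.
brief = Brief(
    problem="Checkout form loses entered data on validation errors",
    audience="Returning customers on mobile",
    constraints=["Use existing design tokens", "No new dependencies"],
)
print(brief.missing())  # ['sequence', 'dependencies', 'quality_standards']
print(brief.render())
```

The value is less in the code than in the habit it encodes: an empty field is a visible gap in the brief before the agent ever sees it.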

And for designers who have been doing this work for 15 or 20 years, there may be more transferable experience here than current discourse suggests. Many of us were trained, formally or informally, to think in systems, to write for implementation, to anticipate ambiguity, and to document intent with precision. Those are not obsolete skills. They are suddenly very relevant again.

The skills AI seems to reward most

From my own experience, a few capabilities have become even more important in AI-augmented workflows.

- Writing: This one is obvious, but it is still underestimated. The clearer the writing, the better the output tends to be. That was true when communicating with engineering teams. It is true when communicating with AI agents. Precision matters. Order matters. Naming matters. Tone matters. The difference between an acceptable result and a strong one often comes down to how well intent is articulated.

- Information architecture: A good specification is not just accurate. It is organized. AI systems respond better when context is structured in ways that make priorities legible: goals, constraints, content hierarchy, states, edge cases, dependencies, examples. This is information architecture in practice. We are not only designing interfaces; we are designing the shape of the instructions that produce them.

- Systems thinking: AI can generate a lot very quickly. That is part of the appeal and part of the danger. Without an underlying system, you can end up with fast-moving inconsistency: components that almost match, flows that almost align, copy that almost reflects the brand, patterns that almost scale. Systems are what keep the work coherent. Tokens, naming conventions, component rules, design governance, and clear standards become more valuable, not less, when output can be produced at high velocity.

- Evaluative judgment: The work does not stop when the AI produces something. Someone still has to assess whether the output is useful, usable, on-brand, internally consistent, and fit for purpose. Someone still has to say, “This is close, but it fails in three important ways,” and then explain those ways clearly enough to improve the next iteration. That judgment is a design skill. It is not replaced by generation.

Accountability does not move

This may be the most important point.

AI can take on significant delivery responsibilities. It can write code, draft content, generate components, summarize research, and propose structures. But accountability for experience quality does not move to the machine.

At least in my own work, that accountability still sits squarely with the human. That means the job changes, but it does not disappear. I spend more time reviewing, redirecting, refining, and stress-testing than I used to. In some cases, I spend more time wrangling the agent than I would spend aligning with a strong human collaborator. That is part of the truth too.

I do not think we do ourselves any favors by pretending this is frictionless. There are moments when the tools feel astonishing. There are also moments when they feel like an overconfident intern with infinite stamina and selective listening.

Both things can be true.

What changed for me in practice

The familiar parts transferred quickly. The calibration did not.

The broad discipline of specification writing came back almost immediately: define the problem, organize the context, articulate the desired outcome, specify the constraints, review the output, refine the instruction. That pattern felt deeply recognizable.

What required adjustment was scale, pacing, and format.

Early on, I often wrote specs that were too long, too dense, or insufficiently structured for the model and task at hand. I had to learn how to break work into cleaner parts, how to front-load the right context, how to manage tradeoffs between completeness and clarity, and how to decide what belonged in a system-level document versus a task-specific one.
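As a rough sketch of that split, the snippet below keeps stable, system-level rules in one document and the task-specific spec in another, then joins them with the system-level material first. It is one possible reading of "front-loading", not a recommended method. The `compose_context` function, the 800-word budget, and the sample text are all assumptions made for illustration, and a word count is only a crude stand-in for however a given model measures length.

```python
def compose_context(system_doc: str, task_spec: str, max_words: int = 800) -> str:
    """Put stable, system-level rules first and the task-specific spec after.

    max_words is an arbitrary illustrative budget, not a real model limit.
    """
    combined = f"{system_doc.strip()}\n\n---\n\n{task_spec.strip()}"
    word_count = len(combined.split())
    if word_count > max_words:
        # Treat an oversized context as a signal to break the work down.
        raise ValueError(
            f"Context is {word_count} words (budget {max_words}); "
            "consider splitting the task into smaller parts."
        )
    return combined


# Hypothetical documents, kept short for the example.
system_doc = "Use the existing design tokens. Name components in PascalCase."
task_spec = "Build the empty state for the saved-items list."
context = compose_context(system_doc, task_spec)
print(context)
```

Raising an error instead of silently truncating is a deliberate choice here: the point is to force a decision about what belongs in which document.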

That learning curve was humbling and useful.

It also made one thing very clear: good specifications describe a system clearly, but they do not guarantee the system itself is right. I have had to rebuild methodology, revisit assumptions, and rethink architecture more than once. Better documentation improves the odds of good output. It does not remove the need for judgment.

Why minimum viable experiments matter

One shift that has helped me a great deal is moving more deliberately toward minimum viable experiments.

Instead of committing too early to a single approach, I have found it far more effective to test quickly, learn quickly, and preserve time and token budget for what proves promising. That has meant trying one path, seeing where it breaks, and pivoting without romantic attachment.

In practice, that kind of experimentation has led to meaningful course corrections. Sometimes the right answer was not “push harder” but “change the approach entirely.”

That mindset matters because AI workflows still sit on unstable ground. Tools improve rapidly, but they also change rapidly. Methods that seemed smart a month ago may already be dated. The willingness to test, discard, and reframe is not a lack of rigor. In this environment, it is part of rigor.

Adversarial review has become one of my favorite disciplines

Another practice I have come to value more is adversarial review.

I increasingly run important artifacts through critique before I consider them finished: specifications, articles, methodologies, interface structures, code. I want the weak points exposed early. I want the hidden assumptions challenged. I want the thing argued with before it reaches the world.

AI can be genuinely useful here. Not because it is always right, and certainly not because it has taste by default, but because it is willing to push on the logic, surface inconsistencies, and help pressure-test an idea from multiple angles. When used well, that can strengthen the work considerably.

There is something oddly comforting about this. For all the novelty of the tooling, one of the best uses of it is profoundly old-fashioned: revision.

This is still a frontier, so a little honesty helps

I do not think enough high-quality content exists yet about how to work this way, especially once the work becomes more complex.

There is no shortage of enthusiasm. There is no shortage of tutorials. There is no shortage of sweeping proclamations about the end of one role and the rise of another. But there is still a shortage of grounded writing about what this feels like in lived practice when you are trying to build something substantial, coherent, and durable.

That is part of why I wanted to share these reflections.

Not as a declaration that I have solved it. I have not. Some of the methodology is still evolving. Some of the architecture is still being worked through. Some days the work feels thrilling; some days it feels like building a ship while the ocean keeps changing its laws.

But even with all of that uncertainty, one pattern feels increasingly solid to me: disciplined thinking is becoming more visible again.

The people who can frame problems well, write clearly, structure context, define systems, and judge quality are not becoming less relevant. They may be becoming more essential.

An invitation to experienced practitioners

If you have been in UX, product design, content design, research, or adjacent disciplines for a long time and feel a little disoriented by the AI conversation, I would offer this encouragement:

You may be more prepared than you think. Not because tenure automatically confers wisdom. It does not. And not because every legacy practice deserves revival. Many do not. But because some of the quieter disciplines many experienced practitioners developed over time, especially around problem framing, documentation, structure, and evaluative judgment, turn out to matter a great deal here.

Your wireframe annotations may have been training for this. Your handoff notes may have been training for this. Your annoying insistence on naming things properly, clarifying states, questioning assumptions, and documenting edge cases may have been training for this.

That does not mean the transition is easy. It does mean the past may be more useful than it looks.

Opening the conversation

I am sharing this less to make a grand claim than to open up a more grounded conversation.

I am interested in how others are experiencing this shift, especially people whose work has always involved some combination of research, architecture, systems thinking, writing, and design judgment.

What skills from earlier phases of your career feel newly relevant?

What documentation habits have become more important, not less?

And where do you feel the friction between speed and rigor most acutely?

I suspect many of us are relearning, in different ways, that good design was never only about the artifact. It was also about the clarity of thought required to make the artifact worthy of trust.
